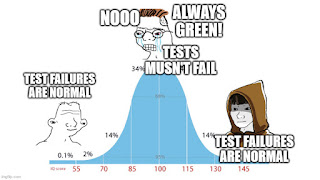

Automated testing of software is great. Unfortunately, what's commonly considered best practice for how to integrate testing into the development flow is a bad fit for a lot of software. You know what I mean: You submit a pull request, some automated testing process is kicked off in the background, and some time later you get back a result that's either Green (all tests passed) or Red (at least one test failed). Don't submit the PR if the tests are red. Sounds good, doesn't quite work.

There is Software Under Test (SUT), the software whose development is the goal of what you're doing, and there's the Test Bench (TB): the tests themselves, but also additional relevant parts of the environment like perhaps some hardware device that the SUT is run against.

The above development practice works well when the SUT and TB are both defined by the same code repository and are developed together. And admittedly, that is the case for a lot of useful software. But it just so happens that I mostly tend to work on software where the SUT and TB are inherently split. Graphics drivers and shader compilers implement some spec (like Vulkan or Direct3D), and important parts of the TB are a conformance test suite and other tests, the bulk of which are developed separately from the driver itself. Not to mention the GPU itself and other software like the kernel mode driver. The point is, TB development is split from the SUT development and it is infeasible to make changes to them in lockstep.

Down with No Failures, long live No Regressions

Problem #1 with keeping all tests passing all the time is that tests can fail for reasons whose root cause is not an SUT change.

For example, a new test case is added to the conformance test suite, but that test happens to fail. Suddenly nobody can submit any changes anymore.

That clearly makes no sense, and because Tooling Sucks(tm), what folks typically do is maintain a manual list of test cases that are excluded from automated testing. This unblocks development, but are you going to remember to update that exclusion list? Bonus points if the exclusion list isn't even maintained in the same repository as the SUT, which just compounds the problem.

The situation is worse when you bring up a large new feature or perhaps a new version of the hardware supported by your driver (which is really just a super duper large new feature), where there is already a large body of tests written by somebody else. Development of the new feature may take months and typically is merged bit by bit over time. For most of that time, there are going to be some test failures. And that's fine!

Unfortunately, a typical coping mechanism is that automated testing for the feature is entirely disabled until the development process is complete. The consequences are dire, as regressions in relatively basic functionality can go unnoticed for a fairly long time.

And sometimes there are simply changes in the TB that are hard to control. Maybe you upgraded the kernel mode driver for your GPU on the test systems, and suddenly some weird corner case tests fail. Yes, you have to fix it somehow, but removing the test case from your automated testing process is almost always the wrong response.

In fact, failing tests are, given the right context, a good thing! Let's say a bug is discovered in a real application in the field. Somebody root causes the problem and writes a simplified reproducer. This reproducer should be added to the TB as soon as possible, even if it is going to fail initially!

To be fair, many of the common testing frameworks recognize this by allowing tests to be marked as "expected to fail". But they typically also assume that the TB can be changed in lockstep with the SUT and fall on their face when that isn't the case.

What is needed here is to treat testing as a truly continuous exercise, with some awareness by the automation of how test runs relate to the development history.

During day-to-day development, the important bit isn't that there are no failures. The important bit is that there are no regressions.

Automation ought to track which tests pass on the main development branch and provide pre-commit reports for pull requests relative to those results: Have there been any regressions? Have any tests been fixed? Block code submissions when they cause regressions, but don't block them for pre-existing failures, especially when those failures are caused by changes in the TB.
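As a minimal sketch of what such a relative report could look like: the function names, the "pass"/"fail" strings, and the dictionary layout below are all assumptions made up for illustration, not the interface of any existing tool.

```python
def compare_to_baseline(baseline, candidate):
    """Classify candidate test results relative to main-branch results.

    Both arguments map a test name to "pass" or "fail" (hypothetical format).
    """
    report = {
        "regressions": [],        # passed on main, fails with the change
        "fixes": [],              # failed on main, passes with the change
        "known_failures": [],     # failed on main, still fails
        "new_failing_tests": [],  # no result on main yet, e.g. newly added to the TB
    }
    for test, outcome in sorted(candidate.items()):
        before = baseline.get(test)  # None if main has no result for this test
        if outcome == "fail":
            if before == "pass":
                report["regressions"].append(test)
            elif before == "fail":
                report["known_failures"].append(test)
            else:
                report["new_failing_tests"].append(test)
        elif before == "fail":
            report["fixes"].append(test)
    return report


def should_block(report):
    """Only regressions block a submission; pre-existing failures do not."""
    return bool(report["regressions"])
```

With this shape, a test that is newly added to the conformance suite and happens to fail shows up in the report without blocking anybody.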

Changes to the TB should also be tested where possible, and when they cause regressions those should be investigated. But it is quite common that regressions caused by a TB change are legitimate and shouldn't block the TB change.

Sparse testing

Problem #2 is that good test coverage means that tests take a very long time to run.

Your first solution to this problem should be to parallelize and throw more hardware at it. Let's hope the people who control the purse care enough about quality.

There is sometimes also low-hanging fruit you should pick, like time wasted on process (or other) startup and teardown overhead. Addressing that can be a double-edged sword. Changing a test suite from running every test case in a separate process to running multiple test cases sequentially in the same process reduces isolation between the tests and can therefore make the tests flakier. It can also expose genuine bugs, though, and so the effort is usually worth it.

But all these techniques have their limits.

Let me give you an example. Compilers tend to have lots of switches that subtly change the compiler's behavior without (intentionally) affecting correctness. How good is your test coverage of these?

Chances are, most of them don't see any real testing at all. You probably have a few hand-written test cases that exercise the options. But what you really should be doing is run an entire suite of end-to-end tests with each of the switches applied to make sure you aren't missing some interaction. And you really should be testing all combinations of switches as well.

The combinatorial explosion is intense. Even if you only have 10 boolean switches, testing each of them individually without regressing the turn-around time of the test suite requires 11x as much test system hardware (one default run plus one run per switch). Testing all possible combinations requires 1024x as much. Nobody has that kind of money.

The good news is that having extremely high confidence in the quality of your software doesn't require that kind of money. If we run the entire test suite a small number of times (maybe even just once!) and independently choose a random combination of switches for each test, then not seeing any regressions there is a great indication that there really aren't any regressions.
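Here is a rough sketch of the per-test sampling, assuming 10 boolean switches. The switch names and the `plan_run` helper are hypothetical; a real compiler would bring its own options.

```python
import random

# Hypothetical switch names, purely for illustration.
SWITCHES = [f"--switch-{i}" for i in range(10)]


def sample_switches(rng, switches=SWITCHES):
    """Enable each boolean switch independently with probability 1/2."""
    return [s for s in switches if rng.random() < 0.5]


def plan_run(tests, seed):
    """Assign an independently sampled switch combination to every test."""
    rng = random.Random(seed)  # seeded, so a failing combination can be reproduced
    return {test: sample_switches(rng) for test in tests}


# Cost of the exhaustive alternatives, in multiples of one default run:
individually = 1 + len(SWITCHES)        # default plus each switch alone
all_combinations = 2 ** len(SWITCHES)   # every subset of the switches
```

A single pass over the suite with `plan_run` costs the same as one default run, yet every switch is enabled for roughly half of the tests.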

Why is that? Because failures are correlated! Test T failing with a default setting of switches is highly correlated with test T failing with some non-default switch S enabled.

This effect isn't restricted to taking the cross product of a test suite with a bunch of configuration switches. By design, an exhaustive conformance test suite is going to have many sets of tests with high failure correlation. For example, in the Vulkan test suite you might have a bunch of test cases that all do the same thing, but with a different combination of framebuffer format and blend function. When there is a regression affecting such tests, the specific framebuffer format or blend function might not matter at all, and all of the tests will regress. Or perhaps the regression is related to a specific framebuffer format, and so all tests using that format will regress regardless of the blend function that is used, and so on.

A good automated testing system would leverage these observations using statistical methods (aka machine learning).

Combinatorial explosion causes your full test suite to take months to run? No problem, treat testing as a genuinely continuous task. Have test systems continuously run random samplings of test cases on the latest version of the main development branch. Whenever a change is made to either the SUT or TB, switch over to testing that new version instead. When a failure is encountered, automatically determine if it is a regression by referring to earlier test results (of the exact same test if it has been run previously, or related tests otherwise) combined with a bisection over the code history.
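The bisection step could look roughly like the following. This is a sketch under strong assumptions: the history is linear, the oldest commit is known to pass, the newest is known to fail, and the test is not flaky. The `passes` callback, which would build a commit and run the test, is hypothetical.

```python
def bisect_first_bad(commits, passes):
    """Return the first commit at which the test fails.

    `commits` is ordered oldest to newest; commits[0] is assumed good and
    commits[-1] is assumed bad. `passes(commit)` runs the test at that commit.
    """
    lo, hi = 0, len(commits) - 1  # invariant: commits[lo] good, commits[hi] bad
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if passes(commits[mid]):
            lo = mid
        else:
            hi = mid
    return commits[hi]
```

This needs only about log2 of the history length in test runs, which matters when each run means building a driver.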

Pre-commit testing becomes an interesting fuzzy problem. By all means have a small traditional test suite that is manually curated to run within a few minutes. But we can also apply the approach of running randomly sampled tests to pre-commit testing.

A good automated testing system would learn a statistical model of regressions and combine that with the test results obtained so far to provide an estimate of the likelihood of regression. As long as no regression is actually found, this likelihood will keep dropping as more tests are run, though it will not drop to 0 unless all tests are run (which, in this scenario, would take months). The team can define a likelihood threshold that a change must reach before it can be committed, based on their appetite for risk and rate of development.

The statistical model should be augmented with source-level information about the change, such as keywords that appear in the diff and commit message and the set of files that was changed. After all, there ought to be some meaningful correlation between regressions in a raytracing test case and the fact that the regressing change affected a file with "raytracing" in its name. The model should then also be used to bias the random sampling of tests to be run to maximize the information extracted per effort spent on running test cases.
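A crude stand-in for such a model is plain keyword matching: give a test more sampling weight for every keyword from the diff that appears in its name. The `boost` factor, the helper names, and the test names in the usage note are invented for illustration; a learned model would replace the weighting function.

```python
import random


def keyword_weights(tests, diff_keywords, boost=5.0):
    """Weight each test by how many diff keywords occur in its name."""
    weights = []
    for name in tests:
        hits = sum(1 for kw in diff_keywords if kw in name)
        weights.append(1.0 + boost * hits)  # every test keeps a nonzero weight
    return weights


def sample_tests(tests, diff_keywords, k, seed):
    """Draw k tests (with replacement), biased towards keyword matches."""
    rng = random.Random(seed)
    weights = keyword_weights(tests, diff_keywords)
    return rng.choices(tests, weights=weights, k=k)
```

For a change touching a file with "raytracing" in its name, `sample_tests(all_tests, ["raytracing"], k=100, seed=0)` would pick raytracing tests more often than others while still occasionally sampling unrelated ones.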

Some caveats

What I've described is largely motivated by the fact that the world is messier than commonly accepted testing "wisdom" allows. However, the world is too messy even for what I've described.

I haven't talked about flaky (randomly failing) tests at all, though a good automated testing system should be able to cope with them. Re-running a test in the same configuration is not black magic and can be used to confirm that a test is flaky. If we wanted to get fancy, we could even estimate the failure probability and treat a significant increase of the failure rate as a regression!
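One way to sketch the "significant increase" check is a one-sided binomial test: estimate the historical failure rate, then ask how likely the newly observed number of failures would be if that rate had not changed. The smoothing, the `alpha` default, and the function names are my assumptions, and treating the historical estimate as exact is a simplification.

```python
from math import comb


def binomial_tail(n, k, p):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def failure_rate_regressed(base_fail, base_runs, new_fail, new_runs, alpha=0.01):
    """Flag a flaky test whose failure rate went up significantly.

    The baseline rate is Laplace-smoothed so that a test which has never
    failed so far does not get an estimated failure rate of exactly zero.
    """
    p0 = (base_fail + 1) / (base_runs + 2)
    return binomial_tail(new_runs, new_fail, p0) < alpha
```

A test that failed 2 out of 100 times on main and now fails 10 out of 20 times gets flagged; the same test failing 1 out of 20 times does not.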

Along similar lines, there can be state leakage between test cases that causes failures only when test cases are run in a specific order, or when specific test cases are run in parallel. This would manifest as flaky tests, and so flaky test detection ought to try to help tease out these scenarios. That is admittedly difficult and will probably never be entirely reliable. Luckily, it doesn't happen often.

Sometimes, there are test cases that can leave a test system in such a broken state that it has to be rebooted. This is not entirely unusual in very early bringup of a driver for new hardware, when even the device's firmware may still be unstable. An automated test system can and should treat this case just like one would treat a crashing test process: Detect the failure, perhaps using some timer-based watchdog, force a reboot, possibly using a remote-controlled power switch, and resume with the next test case. But if a decent fraction of your test suite is affected, the resulting experience isn't fun and there may not be anything your team can do about it in the short term. So that's an edge case where manual exclusion of tests seems legitimate.
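The process-level half of this is easy to sketch with a timeout around each test process. The `run_with_watchdog` name and the three outcome strings are assumptions; recovering a wedged machine would additionally need an out-of-band mechanism such as the remote-controlled power switch mentioned above, which is not shown here.

```python
import subprocess
import sys


def run_with_watchdog(cmd, timeout_s):
    """Run one test process; report "pass", "fail", or "timeout"."""
    try:
        # subprocess.run kills the child process if the timeout expires.
        proc = subprocess.run(cmd, timeout=timeout_s, capture_output=True)
    except subprocess.TimeoutExpired:
        # A real harness might check here whether the system is still healthy
        # and trigger a reboot before resuming with the next test case.
        return "timeout"
    return "pass" if proc.returncode == 0 else "fail"


if __name__ == "__main__":
    # Stand-in "test case": a Python one-liner that exits successfully.
    print(run_with_watchdog([sys.executable, "-c", "pass"], timeout_s=30))
```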

So no, testing perfection isn't attainable for many kinds of software projects. But even relative to what feels like it should be realistically attainable, the state of the art is depressing.
